
bird bar

Bird bar is a bird feeder with a camera on it. A computer vision model watches the feed to identify the species of birds that visit the feeder. The video feed is also live-streamed to the internet, where live info about the birds is shown. Patron data is collected in a time series database for analysis.

Live stream: https://twitch.tv/thebirdbar

Stats: https://birdcam.qlyoung.net/

History

At the start of 2021 I received a window-mount bird feeder as a secret santa gift. As a bird lover I was excited to put it up and get a close up view of some of the birds that inhabited the woods around where I lived. Within around 3 days I had birds showing up regularly.

With the floor plan of my apartment at the time, the only sensible place to put the feeder was on the kitchen window; there was a screened porch on my bedroom window, or I would have put it there. Since my work desk was in my bedroom, this meant that I couldn't watch it while I worked. If that had been an option, the rest of the project may never have materialized.

Shortly after installing the feeder I had the idea to mount a camera pointing at it and stream it to Twitch, so that I could watch the birds while I was at my computer in another room. While watching I found myself wondering about a few of the species I saw and looking up pictures trying to identify them. Then it hit me - this is a textbook computer vision problem. I could build something that used realtime computer vision to identify birds as they appeared on camera.

Fast forward a few years and this has bloomed into a pretty large project, with multiple upgrades to the hardware, the software and the feeder setup. It's definitely the most popular project I've made; my friends think it's cool. It's also served as a good test bed to keep up to date on advances in machine learning and accelerated computing.

Feeder

This section covers the evolution of the feeder construction & installation details.

v1

The first feeder was a generic acrylic bird feeder. The camera was an old webcam I had lying around. Since it was mounted outside it needed to be weatherproofed, which I did with plastic wrap. Subsequent attempts greatly improved the design.

Additional weatherproofing measures included a plastic tupperware lid taped over the camera as a sort of primitive precipitation shield.

Say what you will, but this setup survived a thunderstorm immediately followed by freezing temperatures and several hours of snow. All for $0.

I should also mention this installation is located on the second floor of my apartment building. Putting it up and performing maintenance involves leaning out of the window, so I was anxious to build a durable, maintenance free installation.

Hardware

As of 2026 birdbar runs on an Nvidia Jetson Orin Nano. With a TensorRT-optimized kernel and recent firmware upgrades to the board to unlock additional performance, classification takes about 50 ms/frame, achieving around 20 frames per second. Classification runs at 480×800 resolution.

In the past (2021 thru early 2026) the setup was an Intel NUC with a discrete PNY GTX 1070 connected via Thunderbolt 3 in an eGPU enclosure. It's wild that what used to take a dedicated computer and discrete graphics card has now shrunk to fit entirely on a SODIMM SoM package!

Webcam is a Logitech Streamcam.

Bird Identification

Background

I’d read about YOLO some years before and began to reacquaint myself. It’s come quite far and seems to be more or less the state of the art for realtime computer vision object detection and classification. I downloaded the latest version (YOLOv5 at time of writing) and ran the webcam demo. It ran well over 30fps with good accuracy on my RTX3080, correctly picking out myself as “person”, my phone as “cell phone”, and my light switch as “clock”.

Out of the box YOLOv5 is trained on COCO, which is a dataset of _co_mmon objects in _co_ntext. A model trained on this dataset identifies a picture of a Carolina chickadee as “bird”. Tufted titmice are also identified as “bird”. All birds are “bird” to COCO (at least the ones I tried).

Pretty good, but not exactly what I was going for. YOLO needed to be trained to recognize specific bird species.

Dataset

A quick Google search for “north american birds dataset” yielded probably the most convenient dataset I could possibly have asked for. Behold, NABirds!

NABirds V1 is a collection of 48,000 annotated photographs of the 400 species of birds that are commonly observed in North America. More than 100 photographs are available for each species, including separate annotations for males, females and juveniles that comprise 700 visual categories. This dataset is to be used for fine-grained visual categorization experiments.

Thank you Cornell! Without this dataset, this project probably would not have been possible.

The dataset is well organized. There’s a directory tree containing the images, and a set of text files mapping the file IDs to various metadata. For example, one text file maps the file ID to the bounding box coordinates and dimensions, another maps ID to class, and so on.

Did I mention this dataset also contains bounding box information for individual parts of the birds? Yes, each of the over 48,000 bird pictures is subdivided into bill, crown, nape, left eye, right eye, belly, breast, back, tail, left wing, right wing components. It’s like the holy grail for bird × computer vision projects. For this project I did not need the parts boxes, but it’s awesome that Cornell has made such a comprehensive dataset available to the public.

While well organized, I’m pretty sure this is a non-standard dataset format. YOLOv5 requires data to be organized in its own dataset format, which is fortunately quite simple. To make matters easier, NABirds comes with a Python module that provides functions for loading data from the various text files. Converting NABirds into a YOLOv5 compatible format was fairly straightforward with some additional code. Cornell’s terms of use require that the dataset not be redistributed, so I cannot provide the converted dataset, but I can provide the code I used to process it.
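As a rough sketch of the core of that conversion: a YOLOv5 label file has one line per object in the form `class x_center y_center width height`, with coordinates normalized to the 0-1 range. The sketch assumes NABirds stores each box as top-left pixel `x`, `y` plus `width` and `height`; that layout and both function names are illustrative assumptions, not taken from the actual conversion code. It also assumes class IDs have already been remapped to contiguous zero-based indices.

```python
def nabirds_box_to_yolo(x, y, w, h, img_w, img_h):
    """Convert a pixel box (top-left x, y, width, height) into YOLO's
    normalized (x_center, y_center, width, height)."""
    x_center = (x + w / 2) / img_w
    y_center = (y + h / 2) / img_h
    return (x_center, y_center, w / img_w, h / img_h)


def yolo_label_line(class_index, box, img_size):
    """Format one line of a YOLO label file: 'class xc yc w h'."""
    xc, yc, bw, bh = nabirds_box_to_yolo(*box, *img_size)
    return f"{class_index} {xc:.6f} {yc:.6f} {bw:.6f} {bh:.6f}"


# Hypothetical example: a 200x100 box at (100, 50) in a 400x200 image.
line = yolo_label_line(3, (100, 50, 200, 100), (400, 200))
print(line)  # 3 0.500000 0.500000 0.500000 0.500000
```

Each image gets a `.txt` label file with the same base name, containing one such line per bounding box.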

Training

With the dataset correctly formatted, the next step was to train YOLOv5 on it. This can be somewhat difficult depending on one’s patience and access to hardware resources. Training machine learning models involves lots of operations that are highly amenable to acceleration by GPUs, and to train them in a reasonable timeframe, a GPU or tensor processor is required. I have an RTX3080 at my disposal, so it was easy to get started on my personal desktop.

YOLOv5 offers multiple network sizes, from n to x (n for nano, x for extra large). n, s and m sizes are recommended for mobile or workstation deployments, while l and x variants are geared towards cloud / datacenter deployments (i.e. expensive datacenter GPUs / tensor processors). The larger variants take longer to train and longer to evaluate. Since the model needed to be evaluated on each frame in a video feed, holding all else constant, for this project the choice of model size would ultimately dictate the achievable framerate.

Since the webcam demo with the s model ran at a good framerate on my GPU I chose that one to start.

Training certain kinds of machine learning models is memory intensive. In the case of YOLO, which uses a convolutional neural network (CNN), less GPU memory means fewer images can be processed in a single gradient descent step (the ‘batch size’), significantly increasing the time needed to train. It’s worth noting that training with a smaller batch size shouldn’t affect the final outcome too much, so patience can compensate for lack of GPU memory if necessary. In my case the largest batch size I could get on my GPU was 8.

Training YOLOv5s on my RTX3080 took about 23 hours for 100 epochs with a batch size of 8. Each epoch represents one complete processing of the training dataset. General advice for YOLO is to use about 300 epochs. Since training typically involves a trial-and-error process of tweaking parameters and retraining, and I wanted to try using the m model, clearly the 3080 was not going to be sufficient to get this project done in the desired timeframe. This was a holiday project for me, and I wanted it done before the end of the holidays.

I knew people use cloud compute services to train these things, so it was time to find some cloud resources with ML acceleration hardware. To that end I logged into Google Compute. Some hours later I logged into AWS. Some hours later I logged into Linode. At Linode, I had an RTX6000 enabled instance within about 10 minutes. To this day, I still cannot get GPU instances on GCP or AWS - and not for lack of trying. GCP seemingly had no GPUs available in any region I tried, and I tried several, each with advertised GPU availability. AWS required me to open a support case to get my quota for P class EC2 instances increased, which was denied after three days. I find it amusing that Linode is able to provide an infinitely better experience than Google and Amazon. Speculating, it seems plausible that perhaps AWS and GCP are being flooded by cryptocurrency workloads and Linode is not, or is more strict about banning miners. I have no insight into that. Overall, it seems cloud GPU compute is more difficult to get than I had imagined.

The RTX6000 allowed me to use a batch size of 32, which brought training within a reasonable timeframe of about 2 days for the m model at 300 epochs. Good thing, because at $1.50 an hour, those VMs aren’t cheap. A few days of training was about as much as I was willing to spend on this project, and the graphs indicated that the classification loss was on the order of 3% - more than sufficient for a fun project.
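To put rough numbers on the tradeoff, here is a back-of-the-envelope calculation using only the figures quoted above. It assumes time per epoch stays constant, and the 3080 estimate is for the s model only, so the m model would take longer still. The variable names are just for illustration.

```python
# Figures from the text: 23 hours per 100 epochs (s model, batch size 8).
hours_per_100_epochs_3080 = 23.0
target_epochs = 300

# Linear extrapolation to the recommended epoch count.
est_hours_3080 = hours_per_100_epochs_3080 * target_epochs / 100

# Figures from the text: about 2 days on the RTX6000 at $1.50 per hour.
rtx6000_hours = 2 * 24
hourly_rate_usd = 1.50
est_cost_usd = rtx6000_hours * hourly_rate_usd

print(f"3080, s model, 300 epochs: ~{est_hours_3080:.0f} hours")
print(f"RTX6000, m model, 300 epochs: ~{rtx6000_hours} hours, ~${est_cost_usd:.2f}")
```

So a single 300-epoch run of even the smaller model would have tied up the desktop for nearly three days, versus roughly $72 per run in the cloud.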

Note: Machine learning models are typically trained on some subset of the total available data, with the rest being used to evaluate the model’s performance on data it has not seen before. One of the metadata files provided with the dataset defines a suggested train/test split, classifying each image by its suggested usage. I tried training with the suggested split, and results were significantly worse than using a standard 80/10/10 (train/test/validate) split.
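A minimal sketch of a random 80/10/10 split follows. The function name, the fixed seed and the use of plain integer IDs are assumptions for illustration; the real split would operate on the NABirds image IDs.

```python
import random


def split_dataset(image_ids, train=0.8, test=0.1, seed=0):
    """Shuffle IDs deterministically and cut them into train/test/val lists.
    Whatever is left after the train and test shares goes to validation."""
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)
    n_train = round(len(ids) * train)
    n_test = round(len(ids) * test)
    return {
        "train": ids[:n_train],
        "test": ids[n_train:n_train + n_test],
        "val": ids[n_train + n_test:],
    }


splits = split_dataset(range(1000))
print({name: len(ids) for name, ids in splits.items()})
```

Fixing the seed keeps the split reproducible across retraining runs, so changes in the metrics reflect parameter changes rather than a different partition.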

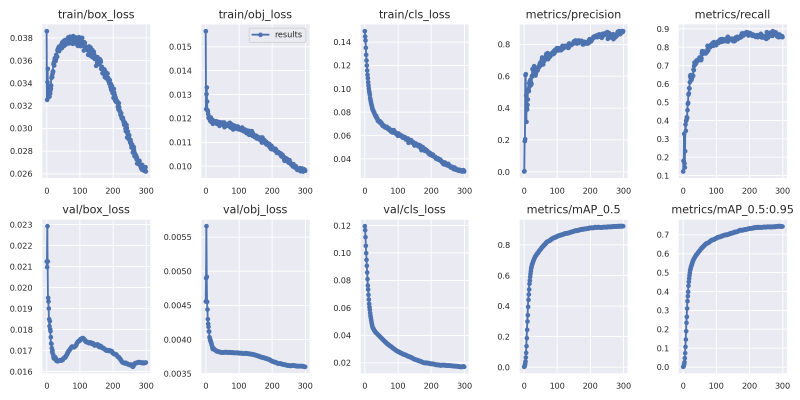

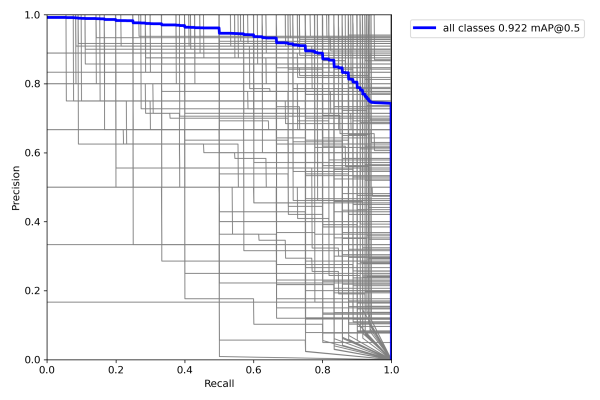

For the data oriented, here is the summary information for the training run of the model I ended up using:

These metrics are all good and show that the model trained very nicely on the dataset.

At this point after waiting for training to finish I was quite excited and ready to try it out. I ran it on a couple of clips from my bird stream and was amazed at the results.

Trying it on an image with three species of chickadee, that to my eye look almost identical:

I’m not sure if these were in the training set; I just searched for the first images of each species I found on Google Images.

The accuracy is impressive. YOLOv5 has certainly achieved its goal of making state of the art computer vision accessible to people outside that field.

If you’d like to use my models, they’re available here.

Video flow

Having demonstrated that it could identify birds with relative accuracy, it was time to get it working on a live video feed.

Even though YOLO is amazingly fast relative to other methods, it still needs a GPU in order to perform inference fast enough to produce results for each frame of a video feed. I set a goal of 30fps; on my 3080, my final model averages roughly 0.020s per frame, sufficient to pull around 40-50fps. This is a good tradeoff between model size/accuracy and evaluation speed. The average with the s model looks to be roughly 0.015s, technically sufficient to pull 60fps, but without much headroom. There are spikes up to the 0.02 range, suggesting that 60fps would likely be jittery. The webcam I was using at the time didn’t really suffice for 60fps anyway.
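The relationship between per-frame inference time and achievable framerate is just a reciprocal. A small sketch, using the timings quoted above and hypothetical helper names:

```python
def max_fps(seconds_per_frame):
    """Upper bound on framerate if inference is the only per-frame cost."""
    return 1.0 / seconds_per_frame


def headroom(seconds_per_frame, target_fps):
    """Fraction of the per-frame time budget left unused at target_fps.
    Negative means the target cannot be met."""
    budget = 1.0 / target_fps
    return (budget - seconds_per_frame) / budget


for label, t in [("final model", 0.020), ("s model", 0.015)]:
    print(f"{label}: up to {max_fps(t):.1f} fps, "
          f"headroom at 60 fps: {headroom(t, 60):+.0%}")
```

At 60fps the budget is about 16.7 ms per frame, so a 15 ms average leaves only around 10% slack, and any spike to 20 ms blows through it.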

So while I had a GPU that could perform inference fast enough, it was in the computer in my bedroom and I didn’t much feel like moving it into the kitchen. I considered several approaches to this problem, including:

- A very long USB extender to the webcam

I actually tried this, and it did work. The problem was that it required me to have my bedroom window open a little bit to route the cable through it, which isn’t ideal for several reasons. Also, when YOLO's video detection script is run on a webcam device, it produces annotated frames of size 640×640. I wanted to stream the full resolution of the camera (1920×1080). I briefly investigated patching detect.py, but the holidays were drawing to a close and I wanted a functioning stream sooner rather than later, so for the time being I resolved to use a video feed, since the script produced annotated frames at the native resolution when given a video.

- eGPU enclosure attached to the webcam host

By this point in the project I’d replaced the laptop with a NUC I happened to have lying around. If this had been a recent NUC with Thunderbolt 3 support, an eGPU enclosure would have been the cleanest and easiest solution. I wanted to avoid buying a new NUC and eGPU enclosure since together those cost quite a bit, and I didn't care to wait for delivery, so I nixed this option.

- Some kind of network streaming setup

Advantages: no cables through windows, no new hardware. I have a pretty good home network and am pretty handy with this stuff so I went with that. After several hours experimenting with RTMP servers, HTTP streaming tools, and the like, I ended up with this setup:

I tried a bunch of other things, including streaming RTMP to a local NGINX server, using VLC as an RTSP source on the webcam box, etc, but this was the setup that was the most stable, had the highest framerate, and lowest artifacts. Actually detect.py does support consuming RTSP feeds directly, but whatever implementation OpenCV uses under the hood introduces some significant artifacts into the output. Using VLC to consume the RTSP feed and rebroadcast it locally as an HTTP stream turned out better. The downside to this is that VLC seems to crash from time to time, but a quick batch script fixed that right up:

    :start
    taskkill /f /im "vlc.exe"
    start "" "C:\Program Files (x86)\VideoLAN\VLC\vlc.exe" rtsp://192.168.0.240:554/live --sout #transcode{vcodec=h264,vb=800,acodec=mpga,ab=128,channels=2,samplerate=44100,scodec=none}:http{mux=ts,dst=:8081/localql} --no-sout-all --sout-keep
    timeout /t 15
    python detect.py --nosave --weights nabirds_v5m_b32_e300_stdsplit.pt --source http://127.0.0.1:8081/localql
    goto start

Why yes, I do have Windows experience :’)

I ran it that way for a couple months or so, but eventually the above setup proved too unreliable. It required running lots of software on both the camera host as well as my desktop, and since it used my desktop GPU for inference it limited what I could use my computer for (read: no gaming). Also, the stream went down every time I rebooted my computer.

After deciding that I wanted to maintain this as a long term installation I ponied up for a NUC and an eGPU enclosure. I initially tried to use the enclosure with an RTX 3070, but I couldn’t get it working with that card so I used a spare 1070 instead which worked flawlessly. The 1070 runs at about 25fps when inferencing with my bird model which is more than enough to look snappy overlaid on a video feed. The whole thing sits on my kitchen floor and is relatively unobtrusive.

60fps

Up to this point I was streaming the window with annotated frames displayed by YOLO’s detect.py convenience script. However, this window updates only as often as an inferencing run completes, so around 25fps. It doesn't look good on a livestream. It would be better to stream video straight from the camera at native framerates (ideally 60fps) and overlay the labels on top of it.

Doing this turned out to be rather difficult because you cannot multiplex camera devices on Windows; only one program can have a handle on the camera and its video feed to the exclusion of all others. Fortunately there is some software which works around this. I purchased that software and used it to create two virtual camera feeds. OBS directly consumes one feed and the other one goes into YOLO for inferencing. The resulting labeled frames are displayed in a live preview window. I patched YOLO so that the preview window, which normally displays the source frame annotated with the inferencing results, only displayed the annotations on a black background without the source frame. That window is used as a layer in OBS with a luma filter applied to make the black parts transparent. With some additional tweaks to get the canvas sizing and aspect ratio correct this allowed me to composite the 25fps inferencing results on top of the high quality 60fps video coming from the camera.
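For anyone curious what the luma keying step amounts to, here is a simplified per-pixel sketch in plain Python. It is not how OBS implements its filter: the Rec. 709 luma weights, the hard threshold and the function names are all assumptions for illustration, and a real filter would blend smoothly rather than switch abruptly.

```python
def luma(pixel):
    """Approximate brightness of an (R, G, B) pixel using Rec. 709 weights."""
    r, g, b = pixel
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def composite(camera_frame, overlay_frame, threshold=16):
    """Show overlay pixels only where they are brighter than the threshold;
    elsewhere the dark overlay is treated as transparent and the camera
    pixel shows through. Frames are lists of rows of (R, G, B) tuples."""
    return [
        [ov if luma(ov) > threshold else cam
         for cam, ov in zip(cam_row, ov_row)]
        for cam_row, ov_row in zip(camera_frame, overlay_frame)
    ]


# A 1x2 frame: the overlay is black on the left, a green box edge on the right.
camera = [[(10, 20, 30), (40, 50, 60)]]
overlay = [[(0, 0, 0), (0, 255, 0)]]
result = composite(camera, overlay)
print(result)  # [[(10, 20, 30), (0, 255, 0)]]
```

Because the overlay only changes when an inference run completes, the camera layer underneath can keep updating at its own, higher framerate.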

For encoding I use Nvenc on the 1070. That keeps the stream at a solid 60fps, which the NUC CPU can’t accomplish. Between inferencing and video encode the card is getting put to great use.

This was stable for over a year, until I decided to install Windows 11. What could go wrong?

Camera

The original setup used an off-brand 720p webcam wrapped in a righteous amount of plastic wrap for weatherproofing. Surprisingly the weatherproofing worked well and there was never a major failure while using the first camera. However, the quality and color on that camera wasn’t good and an upgrade was due. I already had a Logitech Brio 4k webcam intended for remote work, but it ended up largely unused so it was repurposed for birdwatching.

While the plastic wrap method never had any major failures it wasn’t ideal either. Heavy humidity created fogging inside the plastic that could take a few hours to wear off. It needed replacing anytime the camera was adjusted. Due to these problems and the higher cost of the Brio I decided to build a weatherproof enclosure.

The feeder is constructed of acrylic. My initial plan was to use acrylic sheeting to build out an extension to the feeder big enough to house the camera. I picked up some acrylic sheeting from Amazon and began researching appropriate adhesives. It turns out most adhesives don’t work very well on acrylic, at least not for my use case – the load bearing joints between the sheets were thin and I needed the construction to be rigid enough to support its own weight and the weight of the camera without sagging. Since the enclosure would be suspended over air, relying on its inherent rigidity for structure, the adhesive needed to be strong.

The best way to adhere acrylic to itself is using acrylic cement. Acrylic cement dissolves the surfaces of the two pieces to be bonded, allowing them to mingle, and then evaporates away. This effectively fuses the two pieces together with a fairly strong bond (though not as strong as if the piece had been manufactured that way).

Three sides were opaque to prevent sunlight reflections within the box. I caulked and taped the joints to increase weather resistance. I played around with using magnets to secure the enclosure to the main feeder body but didn’t come up with anything I liked, so I glued it to the feeder with more acrylic cement, threw my camera in there and called it a day.

This weatherproofing solution turned out great. It successfully protected the camera from all inclement weather until I retired that feeder, surviving rain, snow, and high winds over the course of the year.

Switching to Linux

As it turns out, the webcam splitter software appears to rely on some undocumented / unofficial Windows 10 APIs and does not work on Windows 11. I decided to bite the bullet and just put Linux on the NUC.

Similar to Windows, on Linux only one process can be reading from a camera device file at a time. Unlike Windows, there is a very easy way to work around this called v4l2loopback. This is a kernel module that allows you to create virtual video device files that can be fed video from a userspace application such as gstreamer. These virtual device files support multiple clients.

tl;dr:

    # load v4l2loopback module; this creates a few loopbacks
    sudo modprobe v4l2loopback
    # set desired parameters on loopback device /dev/video2
    sudo v4l2loopback-ctl set-fps 60 /dev/video2
    # pipe video from camera device /dev/video0 to loopback
    gst-launch-1.0 v4l2src device=/dev/video0 ! video/x-raw,width=1920,height=1080 ! videoconvert ! v4l2sink device=/dev/video2

After this, any number of clients can read from /dev/video2. I replicated my video + annotations compositing setup in OBS as described earlier without issue.

On the off chance this helps someone, here's how you set video camera parameters on device 0 (/dev/video0) from the command line:

    sudo v4l2-ctl -d 0 -c focus_automatic_continuous=0
    sudo v4l2-ctl -d 0 -c focus_absolute=75
    sudo v4l2-ctl -d 0 -c backlight_compensation=0
    sudo v4l2-ctl -d 0 -c auto_exposure=3

Around this time I also replaced the feeder since the last one was all scratched up and the acrylic had gotten all cloudy. I also replaced the Brio with a Logitech Streamcam duct taped to the feeder. I found that the Brio didn't correctly handle gain adjustment, so bright sunlight would blow it out. The Streamcam, which is much more affordable, doesn't have that issue.

You can watch the livestream here.

Birds

These were the first birds to appear and be identified live on camera. Congratulations.

In the case of sexually dimorphic species that also have appropriate training examples, such as house finches, it’s even capable of distinguishing the sex.

In a few cases, such as the nuthatch and the pine warbler, the model taught me something I did not know before. Reflecting on that, I think that makes this one of my favorite projects. Building a system that teaches you new things is cool.

Statistics

I was curious how many birds were visiting per day, what peak hours were and what the species distribution looked like. In order to get this information the first step was data collection, so I thought about how to best collect data on visitors. Conceptually I wanted to log each visitor along with some relevant data such as species, time of visit, length of visit etc. as a single record – one per visitor. However, this is one of those problems that seems easy but is actually quite hard. The classification algorithm runs once per video frame but there is no context retained between frames; it can’t tell you that the bird detected in frame N_1 is the same one it detected a moment ago in frame N_0. Extracting this sort of context is probably feasible, perhaps by combining some heuristics with positional information (is the bird of species X in this frame in the same position as bird of species X in the previous frame? If so, probably the same bird). However, this fell in the realm of “too hard for this project.” Instead of storing bird visits I decided to store the data in its elementary format as a time series of detection events.
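For illustration, the positional heuristic described above could look something like the sketch below. To be clear, this is not something the project implements. The tuple layout, the function names and the `max_gap` / `min_iou` thresholds are all hypothetical, and a greedy match like this would struggle with several birds of the same species on the feeder at once.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def group_into_visits(detections, max_gap=1.0, min_iou=0.3):
    """Greedily merge (time_s, species, box) detections into visits.
    A detection extends a visit if the species matches, it arrives within
    max_gap seconds of the visit's last detection, and the boxes overlap."""
    visits = []
    for t, species, box in sorted(detections, key=lambda d: d[0]):
        for v in visits:
            if (v["species"] == species and t - v["end"] <= max_gap
                    and iou(v["box"], box) >= min_iou):
                v["end"], v["box"] = t, box
                break
        else:
            visits.append({"species": species, "start": t, "end": t, "box": box})
    return visits


detections = [
    (0.00, "Carolina Chickadee", (100, 100, 200, 200)),
    (0.04, "Carolina Chickadee", (105, 100, 205, 200)),
    (0.08, "Tufted Titmouse", (400, 100, 500, 200)),
    (5.00, "Carolina Chickadee", (100, 100, 200, 200)),
]
visits = group_into_visits(detections)
print([(v["species"], v["start"], v["end"]) for v in visits])
```

Here the first two chickadee detections merge into one visit, while the one five seconds later starts a new visit because the gap exceeds `max_gap`.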

I have some familiarity with time series databases, having used InfluxDB for a different project. Influx was nice for that project but I wanted to learn something new so I chose TimescaleDB this time around. Timescale is an extension to Postgres and I wanted to learn more about Postgres anyway so it was a good choice from a learning perspective. Since the plan was to insert a new record for every frame where an object was detected and the detection loop runs at about 25fps I was expecting a lot of data. I had read that Timescale handles that sort of scale a bit better than Influx, so that was another factor in choosing Timescale.

After setting up Timescale on a VPS it was time to design the database schema. I had a vague understanding of how relational databases worked and had written a few lines of SQL, but otherwise lacked database experience. After writing out a naive (in retrospect) schema it occurred to me that there was probably a way to do it right. I began learning about schema design and database normalization, a topic I found surprisingly interesting. This is the schema I ended up with, and while I don't think it's perfectly normalized it’s better than what I had initially.

Note the data for the feed present in the feeder. I thought it would be cool to correlate feed with frequency of species. However, I set things up so that changing the feed required a manual record insert, and I got lazy and stopped doing it, so I don't have the dataset needed now.

After setting up the database on the server, I made some hacks in the YOLOv5 code to push detection data directly to the database whenever a detection occurred. After a short time I had tons of visitor records in my database. Next I wanted to answer questions like “what is the most common species over the last week?” and “what time of day has peak volume?” To accomplish this I set up a Grafana dashboard.
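As a self-contained illustration of the kind of queries involved, the sketch below uses Python's built-in SQLite in place of TimescaleDB/Postgres. The single `detections` table, its column names and the sample rows are all hypothetical; they are not the schema used on the real server. Counts here are per-frame detection events, not distinct visits.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical, simplified table: one row per detection event.
conn.execute("CREATE TABLE detections (time TEXT, species TEXT, confidence REAL)")
conn.executemany(
    "INSERT INTO detections VALUES (?, ?, ?)",
    [
        ("2024-01-06 08:15:00", "House Finch", 0.91),
        ("2024-01-06 08:15:01", "House Finch", 0.93),
        ("2024-01-06 08:40:00", "Tufted Titmouse", 0.88),
        ("2024-01-06 15:05:00", "House Finch", 0.90),
        ("2023-12-01 09:00:00", "Pine Warbler", 0.75),  # older than a week
    ],
)

now = "2024-01-07 12:00:00"  # fixed "current time" so the example is reproducible

# Most common species over the last week.
top_species = conn.execute(
    "SELECT species, COUNT(*) AS n FROM detections "
    "WHERE time >= datetime(?, '-7 days') "
    "GROUP BY species ORDER BY n DESC",
    (now,),
).fetchall()

# Hour of day with the most detections over the same window.
peak_hour = conn.execute(
    "SELECT strftime('%H', time) AS hour, COUNT(*) AS n FROM detections "
    "WHERE time >= datetime(?, '-7 days') "
    "GROUP BY hour ORDER BY n DESC LIMIT 1",
    (now,),
).fetchone()

print(top_species)
print(peak_hour)
```

A dashboard panel would run the same sort of aggregate against the live table, with the time window supplied by the dashboard instead of a hard-coded `now`.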

Then I thought it would be cool to show these graphs on the livestream. It turns out Grafana supports embedding individual graphs, and since OBS supports rendering browser views it was easy to get those set up.

I left these up for a while, but ultimately I felt they were taking up too much space in the stream so I took them down.

Thoughts

Overall I was extremely satisfied with the results of this project. The initial project took about 5 days to complete. I learned something at each step.

I can think of a lot of things to do with this project, in no particular order:

- Bot that posts somewhere when a bird is seen

- Retrain with background images to reduce false positives

Except where otherwise noted, content on this wiki is licensed under the following license: CC Attribution-Noncommercial-Share Alike 4.0 International
